The Science of Climate Prediction

INTRODUCTION

In our solar system only the Earth finds itself in the middle of a narrow “Goldilocks” zone. We are not too close to the sun and too hot, nor too far away and too cold. Rather, we are at just the right distance for balmy day trips to the beach.

Even more remarkable is the fine-tuning of our orbit given the chaotic chemistry of the Earth’s biosphere, namely the delicate influence of oceans and atmosphere on global temperature. In any event, the net result of this providence is exactly the right environment for the exquisitely sensitive carbon chemistry of life.

Combining the solar irradiance, or energy output of the sun, with the Earth’s distance from the sun gives us an average temperature of 0 degrees Fahrenheit (-18 degrees Celsius). If this were the entire story, the oceans would long ago have frozen solid and life would never have evolved.
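The 0°F figure is the standard radiative-equilibrium estimate. As a minimal sketch, assuming a solar constant of 1361 W/m² and a planetary albedo of 0.30 (the fraction of sunlight reflected straight back to space, which the text does not mention but which the estimate needs), the Stefan-Boltzmann law gives the temperature at which the Earth’s emitted heat balances the sunlight it absorbs:

```python
# Minimal sketch: radiative-equilibrium ("no greenhouse") temperature of the Earth.
# ASSUMED inputs, not given in the text: solar constant 1361 W/m^2, albedo 0.30.

SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4


def equilibrium_temperature_k(solar_constant: float, albedo: float) -> float:
    """Temperature at which emitted thermal radiation balances absorbed sunlight.

    Sunlight is intercepted over a disc (pi r^2) but heat is radiated from the
    whole sphere (4 pi r^2), hence the factor of 4.
    """
    absorbed = solar_constant * (1.0 - albedo) / 4.0  # W/m^2, averaged over the sphere
    return (absorbed / SIGMA) ** 0.25


def kelvin_to_celsius(t_k: float) -> float:
    return t_k - 273.15


def celsius_to_fahrenheit(t_c: float) -> float:
    return t_c * 9.0 / 5.0 + 32.0


t_k = equilibrium_temperature_k(1361.0, 0.30)
t_c = kelvin_to_celsius(t_k)
t_f = celsius_to_fahrenheit(t_c)
print(f"{t_k:.1f} K = {t_c:.1f} C = {t_f:.1f} F")
```

With these assumed inputs the sketch gives roughly 255 K, about -18°C or -1°F, within a couple of degrees of the figure quoted above; other albedo choices shift the result by a few degrees.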

But this ignores the Earth’s atmosphere and so is not what we observe. What warms the environment to levels amenable to life is the copious water vapor evaporated from the world’s oceans. Water vapor is our “greenhouse” gas: it is transparent to the sun’s incoming visible light but absorbs outgoing infrared light and re-radiates part of it back to the Earth’s surface rather than letting it all escape into space.

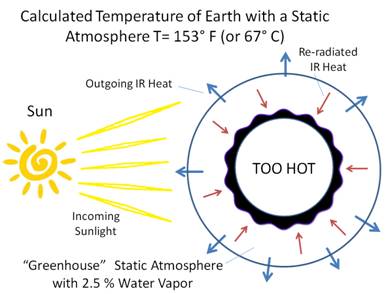

While including the greenhouse effect is more difficult, we can nevertheless calculate the consequent average global temperature. Using the current composition of the atmosphere, including the average concentration of water vapor (about 2.5%), the average temperature is predicted to be no less than 153 degrees Fahrenheit (67 degrees Celsius) [1]. Note that this calculation uses a static, “layered,” unmixed atmosphere.

Again, this isn’t what we observe, but that is because we have ignored the heat loss caused by transient convection currents, including evaporation and condensation, more commonly called the winds and the weather. It is the convective churning of the atmosphere, with its jet streams, thunderstorms, hurricanes, clouds, and breezes of all descriptions, as well as “negative feedback loops”, that reduces our global temperature to a comfortable 59 degrees Fahrenheit (15 degrees Celsius) [2]. Circulating “Hadley cells” move hot equatorial air from the surface to high altitudes and poleward. This allows the atmosphere to radiate away heat, efficiently but chaotically cooling the planet.

Unfortunately, the current temperature of the Earth cannot be calculated from first principles, or “ab initio”; it can only be measured. This is not the result of any ignorance of atmospheric physics, nor can it be corrected with faster computers or more generous research grants.

Rather, this theoretical roadblock comes from an understanding, beginning in the 1960s, that short-term weather belongs to a class of problems now called “chaotic.” This draws a curtain on any model prediction of the global atmosphere beyond about 10 to 14 days [3]. This is a theoretical mathematical limit characterized in part by the infamous “butterfly” effect.

And if it is scientifically impossible to build predictive models of the weather in the short term, then it is logically impossible to build predictive models of these same weather systems, or climate, in the long term. And of course, any imagined difference between a short-term weather model and a longer-term climate model, which has the same physical properties and is described by exactly the same equations (i.e. Navier-Stokes), is non-existent and meaningless.

Hopefully it is also obvious that averaging many simulations, each of which is at best an approximation of theoretically unpredictable quantities, can never eliminate exponentially growing mathematical uncertainties or random bias regardless of the number of trials.
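The sensitivity to initial conditions behind the “butterfly” effect can be seen in Lorenz’s own 1963 convection equations, a three-variable toy system rather than a weather model. The sketch below uses Lorenz’s classic parameter values and an arbitrarily chosen step size, starting point, and divergence threshold. It runs two copies of the system from starting points that differ by a tiny amount and reports when they stop agreeing (time is in the model’s dimensionless units):

```python
# Minimal sketch of the "butterfly effect" with the Lorenz (1963) equations, a
# three-variable toy convection model, not a weather model. sigma=10, rho=28,
# beta=8/3 are Lorenz's classic values; the step size, starting point, and
# divergence threshold are arbitrary choices for illustration.


def lorenz(state, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Time derivatives (dx/dt, dy/dt, dz/dt) of the Lorenz system."""
    x, y, z = state
    return (sigma * (y - x), x * (rho - z) - y, x * y - beta * z)


def rk4_step(state, dt):
    """Advance the Lorenz system one step with classical fourth-order Runge-Kutta."""

    def shifted(base, slope, scale):
        return tuple(b + scale * s for b, s in zip(base, slope))

    k1 = lorenz(state)
    k2 = lorenz(shifted(state, k1, dt / 2))
    k3 = lorenz(shifted(state, k2, dt / 2))
    k4 = lorenz(shifted(state, k3, dt))
    return tuple(
        s + dt / 6.0 * (a + 2.0 * b + 2.0 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )


def divergence_time(perturbation, threshold=1.0, dt=0.01, t_max=60.0):
    """Time until two runs, started `perturbation` apart in x, differ by `threshold`."""
    a = (1.0, 1.0, 1.0)
    b = (1.0 + perturbation, 1.0, 1.0)
    t = 0.0
    while t < t_max:
        a, b = rk4_step(a, dt), rk4_step(b, dt)
        t += dt
        distance = sum((ai - bi) ** 2 for ai, bi in zip(a, b)) ** 0.5
        if distance > threshold:
            return t
    return float("inf")  # never diverged within t_max


for eps in (1e-3, 1e-6, 1e-9):
    print(f"initial error {eps:g}: runs differ by > 1 after t = {divergence_time(eps):.1f}")
```

Shrinking the initial error by six orders of magnitude should delay the divergence, but only by a modest, roughly fixed amount per factor of ten, not in proportion to the gain in accuracy.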

MINOR GREENHOUSE GASES

Earth’s climate is governed by titanic forces far beyond any foreseeable or even possible influence of humanity. Variations in our orbit about the sun, whose distance from us varies by about 3 million miles, the tilt of the Earth’s axis, which causes the seasons, and the uneven heating of ocean and land at different elevations all drive the Earth’s chaotic hydrological cycle of clouds, rain, snow, and hurricanes. These manifestly and massively dominate all other influences.

Indeed, variations in water vapor evaporated from the oceans alone are at least two orders of magnitude more influential than small fluctuations in trace amounts of anything else, which are generally measured in parts per million or billion. Nevertheless, other “greenhouse” gases are also present in the air, most notably carbon dioxide and methane; of these, CO2, though nearly insignificant in total effect, is the more important minority constituent.

First, note that CO2 exists only in minute quantities, just over 0.04% for CO2 versus 1%-4% for water vapor, meaning there is as much as 100 times less CO2. The rarity of CO2 means it is one of the few global features humanity has any influence on.

But lest we become too concerned, equilibrium CO2 concentrations in the air on the time scale of decades are determined only by the relatively massive amount of dissolved CO2 in the oceans. This amount depends strongly on ocean temperature and on nothing else.

Indeed, the short-term fluctuation from human activities is swamped by natural sources and sinks. Annual cycles of plant growth and decay produce a variable amount, depending on rainfall and weather, averaging about 114 ppm. This can be compared to the human addition from burning fossil fuels, which varies with economic conditions but currently averages about 4.4 ppm per year. Along with emissions from volcanoes, these minuscule amounts are quickly re-absorbed by natural sinks, for a net annual increase from all sources of only 2 ppm. Clearly excess CO2 is easily re-absorbed.

And observations support oceanic control of the atmosphere. For instance, it is telling that net CO2 increases do not track variations in human emissions but are strongly correlated with surface heating by El Niño events and with cooling by volcanic eruptions. Indeed, from the simplest of considerations, human contributions cannot account for more than one-third of the total annual CO2 increase [4], which over decades is completely swamped by oceanic equilibrium.

Also, because CO2 diffuses within ice cores, spikes lasting only a few thousand years are preferentially erased from the historical record, so it is not reasonable to suggest that current CO2 levels are unique or even unusual. But this is somewhat moot, because CO2 is now close to the lowest it has been over the last 250 million years. And the most recent spike, much above current levels, was some 12,500 years ago.

![image 3](Science%20of%20Climate%20Prediction_files/image004.png)

Nor does excess CO2 have a long residence time in the atmosphere before equilibrium with the world’s oceans is again achieved. The oceans vastly overwhelm all other sources of CO2 emissions, including paltry human sources, and restore that equilibrium with a decay time of less than about 6 years [4].
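Taking the text’s figure at face value, and assuming as a simplification that an excess pulse relaxes as a single exponential with time constant τ = 6 years, the fraction still airborne after t years is exp(-t/τ). A minimal sketch:

```python
import math

# Minimal sketch, ASSUMING single-exponential (first-order) relaxation of an excess
# CO2 pulse toward ocean equilibrium. TAU_YEARS is the text's upper estimate [4].

TAU_YEARS = 6.0


def fraction_remaining(years: float, tau: float = TAU_YEARS) -> float:
    """Fraction of an initial excess still airborne after `years`."""
    return math.exp(-years / tau)


def half_life(tau: float = TAU_YEARS) -> float:
    """Time for half of the excess to be removed: tau * ln 2."""
    return tau * math.log(2.0)


for t in (1, 6, 12, 30):
    print(f"after {t:2d} years: {fraction_remaining(t):.3f} of the pulse remains")
print(f"half-life: {half_life():.1f} years")
```

Under that assumption about 37% of a pulse remains after 6 years and about 0.7% after 30 years, and the half-life is about 4.2 years.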

But in rebuttal, global warming advocates claim that CO2 is not naturally eliminated from the atmosphere but remains for hundreds to thousands of years, a rejection of undeniable physics and actual observations. A favorite mantra is that absorption into rocks requires millennia, which ignores vegetation and oceans that operate within a few months to years.

Methane is a much more potent greenhouse gas but is so extremely rare (measured in parts per billion) as to be significantly less important than even carbon dioxide. Ozone and nitrous oxide also make extremely small contributions.

ABSORPTION SPECTRUM OF CO2

It is interesting to note, if not ultimately definitive, that the heat retention effect of a single CO2 molecule is less than about one-eighth that of a single molecule of H2O. In this sense, and to first approximation, CO2 is not really a greenhouse gas at all [5].

This is because in H2O the atomic arrangement is asymmetric, so that the center of negative charge is displaced from the center of positive charge. This forms a permanent separation of charge, called a “dipole moment”, that interacts strongly with heat in the form of IR electromagnetic radiation. In dramatic contrast, the atoms in CO2 are collinear, with no permanent dipole moment (only a much weaker quadrupole moment). So it is only when thermal vibration and rotation jiggle the atoms of CO2 out of line that there is any interaction with IR at all, and a pitifully small one. Also, the hydrogen atoms in H2O and methane are lighter than the carbon and oxygen in CO2 and have a much wider range of motion.

But this is not definitive, because even the barely measurable trace amounts of CO2 now present already absorb all of the Earth’s outgoing infrared heat radiation that they are able to absorb. The massively greater amounts of water vapor perform the same function, but over a much, much shorter distance. What this does mean is that any infrared radiation that CO2 might have absorbed in various frequency bands is already absorbed by water vapor. This alone overwhelms CO2’s contribution, making it even more insignificant, if that is still possible.

There are admittedly a few narrow absorption bands where only CO2 is active (e.g. around 4 microns), but they do not contribute more warming with increasing CO2. This is because heat absorption and re-radiation are not linear with concentration but rather logarithmic. Fully half of the total, but tiny, CO2 warming effect comes from the first 20 parts per million (about 1/20th of current concentrations). That is to say, at each stage, to get the same heating as before, the amount of CO2 has to be doubled again. At the current rate, the time for mankind’s CO2 contributions to double the amount of CO2, assuming no removal by natural sinks, is roughly 100 years, but such a doubling is actually impossible because we will first run out of fossil fuels.
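The doubling rule and the roughly 100-year estimate can be sketched in a few lines. The sketch assumes a purely logarithmic law, warming proportional to log2(C/C0), with a placeholder increment of one arbitrary unit per doubling (hypothetical, not a figure from the text). It uses the text’s own ~400 ppm and ~4.4 ppm/year for the doubling time:

```python
import math

# Minimal sketch of a purely logarithmic warming law. PER_DOUBLING is a
# hypothetical placeholder (arbitrary units); only the shape of the curve matters.

PER_DOUBLING = 1.0


def log_warming(c_ppm: float, c0_ppm: float) -> float:
    """Warming relative to concentration c0_ppm under a purely logarithmic law."""
    return PER_DOUBLING * math.log2(c_ppm / c0_ppm)


def years_to_double(current_ppm: float, added_ppm_per_year: float) -> float:
    """Years for constant additions (no natural sinks) to equal the current amount."""
    return current_ppm / added_ppm_per_year


# Each successive doubling (100->200, 200->400, 400->800 ppm) adds the same increment.
steps = [log_warming(2 * c, c) for c in (100.0, 200.0, 400.0)]
print(steps)

# The text's figures: about 400 ppm today and about 4.4 ppm/year from fossil fuels.
print(f"{years_to_double(400.0, 4.4):.0f} years to double")
```

Each of the three successive doublings contributes the same single unit, and 400/4.4 comes to about 91 years, in line with the roughly 100 years quoted. A pure logarithm is only meaningful away from zero concentration, so this sketch says nothing about the first few ppm.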

Thus at this point adding more CO2 has almost no effect whatever. This is called “saturation” and is similar to applying more and more black paint to a window. Beyond some point, more black paint can’t really make the room any darker.
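The black-paint analogy corresponds to the Beer-Lambert law for a single absorption band: the fraction absorbed is 1 - exp(-k·c), so each doubling of the absorber amount c adds less than the one before. A minimal sketch, with a hypothetical coefficient k in arbitrary units and none of the line-shape or band-wing detail of a real spectrum:

```python
import math

# Minimal sketch of saturation within one absorption band (Beer-Lambert law).
# K_ABSORPTION is a hypothetical coefficient in arbitrary units; real bands have
# line shapes and wings that this single-exponential form does not model.

K_ABSORPTION = 1.0


def absorbed_fraction(amount: float, k: float = K_ABSORPTION) -> float:
    """Fraction of incident radiation in the band absorbed by `amount` of absorber."""
    return 1.0 - math.exp(-k * amount)


previous = 0.0
for amount in (1, 2, 4, 8, 16):
    frac = absorbed_fraction(amount)
    print(f"amount {amount:2d}: absorbs {frac:.4f} (gain {frac - previous:+.4f})")
    previous = frac
```

The absorbed fraction climbs quickly toward 1 while each successive gain shrinks, which is the sense of “saturation” used above.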

In fact, the only real effect of more CO2 is to slightly change the height at which water vapor, our only significant greenhouse gas, absorbs and retains heat. This very slightly and inconsequentially changes the area of the sphere that radiates heat away into space.

GLOBAL HEATING CAUSED BY CO2

In rebuttal, advocates of catastrophic warming hypothesize that excess CO2 might have a significantly larger indirect effect by triggering a positive feedback loop in the atmosphere, perhaps by increasing water vapor. Unfortunately, it is not theoretically possible to calculate this and, failing a calculation, such an effect has not been observed by NOAA satellites over the last several decades either. Rather, real-world observations unmistakably show less water vapor in the upper atmosphere, where it is more influential, again demonstrating the expected negative feedback loop [6].

Please note that, because no transient temperature fluctuation has yet caused the Earth to catch fire and melt, negative feedback loops must be both present and strongly influential, all other considerations aside.

The combined result of these straightforward considerations is that CO2 from all sources contributes less than 2%-3% of the Earth’s total greenhouse effect. And assertions that CO2 would be much more influential if there weren’t any water vapor are nonsensical, because we live on a water world and couldn’t get rid of the oceans no matter how much some might want to.

Back-of-the-envelope calculations based on the unabridged data published by the United Nations IPCC [7] demonstrate that the upper limit on human-caused (i.e. anthropogenic) global warming is almost certainly too small to measure.

CHAOTIC MODELLING THEORY

But it gets worse, because despite all the rhetoric, the only reason human-caused global warming has been seriously considered at all is computer models.

Modeling chaotic systems is impossible because even large increases in the accuracy with which the initial conditions are measured only slightly extend the time before the calculations and reality diverge. The net effect is that the window of predictability asymptotically approaches a finite limit even as the accuracy of measuring the initial conditions increases without limit [8-9].

The blue line shows roughly how many days into the future global temperatures can be accurately predicted as a function of how well we know the present state of the atmosphere. That is, three decimal places of accuracy means we have measured the initial conditions to one part in one thousand, and six decimal places means one part in a million. The red line is the theoretical maximum limit of accurate predictions, also called the “asymptotic limit.”
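As a purely illustrative toy, with every number below hypothetical, one way a finite “asymptotic limit” can arise is the multi-scale argument associated with Lorenz’s 1969 work. An error starting at a fine scale must climb one scale at a time, and finer scales saturate faster, so the total time is a convergent geometric series. The sketch assumes, arbitrarily, that each extra decimal place of accuracy pushes the initial error down one scale:

```python
# Toy sketch, every number hypothetical, of how a predictability horizon can
# approach a finite limit as initial-condition accuracy improves without limit.
# Assumption (after Lorenz's 1969 multi-scale argument): an error must climb one
# scale at a time, each finer scale saturating faster than the one above it.
# Mapping one decimal place of accuracy to one finer scale is an arbitrary choice.

LARGEST_SCALE_DAYS = 7.0  # hypothetical time for errors to saturate the largest scale
SPEEDUP = 0.5             # hypothetical: each finer scale saturates twice as fast


def cascade_horizon_days(decimal_places: int) -> float:
    """Total time for an error to climb from the finest measured scale to the top."""
    return sum(LARGEST_SCALE_DAYS * SPEEDUP ** k for k in range(decimal_places))


def asymptotic_limit_days() -> float:
    """Sum of the infinite geometric series: the toy horizon for perfect accuracy."""
    return LARGEST_SCALE_DAYS / (1.0 - SPEEDUP)


for places in (1, 3, 6, 9, 12):
    print(f"{places:2d} decimal places -> {cascade_horizon_days(places):6.3f} days")
print(f"asymptotic limit -> {asymptotic_limit_days():.1f} days")
```

With these made-up parameters the horizon climbs from 7 days at one decimal place to within a few thousandths of a day of the 14-day limit at twelve, so each additional decimal place buys less than the last. A single fixed error-growth rate would instead give a horizon that keeps growing, if slowly, with each decimal place.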

Hopefully it is also obvious that running weather models longer than about two weeks, which gives theoretically wrong values in each case, will not “average out” errors, however many computer runs are repeated. To believe otherwise is “magical” rather than mathematical thinking.

In more technical terms, the erroneous but oft-expressed thought is that if a climate model includes most of the physical processes, then even though its trajectory through phase space diverges from what actually happens (e.g. model thunderstorms are ignored or go in the wrong direction, model daily temperatures are grossly inaccurate, model cloud cover is laughably off the mark, and so forth), enough of phase space might nevertheless be sampled to calculate about the same simple average values as for the real weather or climate.

To some extent this forlorn hope is born of those calculations, both static and dynamic, that are possible. We can, for instance, calculate the equilibrium pressure, temperature, and volume of a sample of the atmosphere. We can model the smooth flow of air over an airplane wing and can even approximate turbulent flow.

The problem with the weather is that its numerous, non-linear feedback loops trigger chaotic, non-repeating trajectories through phase space that are in principle unpredictable. This is because the consequences of even one small chance occurrence expand exponentially, drastically altering averages across the globe and on all time scales.

This fact rules out the possibility that linear arithmetic averages of temperature, in any conceivable climate model with exponentially increasing errors, will faithfully approximate the same non-linear and highly dynamic values in the real world. And this is mathematically rigorous and supported by many observations [8-9].

Thus there is a fixed time beyond which no prediction is possible, regardless of computing resources, regardless of the quality of the model, and even regardless of one’s knowledge of the initial conditions. This, coupled with the extreme sensitivity of chaotic systems to random chance, i.e. the oft-quoted “butterfly” effect [8-9], makes computer approximations worthless as predictors of weather, or even of climate responses to human-scale political policy changes.

Global warming advocates spin this by saying that climate modeling is merely hard, or very difficult. These are misrepresentations: prediction is impossible. Will it rain on your party two weeks from now? Will there be an abundance of hurricanes or none at all next year? Will the date of the last killing frost, heralding the start of the planting season, come early or late? Will minuscule increases in trace amounts of CO2 cause global temperatures to increase over the next several decades, or will global temperatures instead decrease? No possible computer simulation can tell you, and not because we know too little science but because we know too much. Again, the global atmosphere is mathematically “chaotic.”

MODEL INACCURACY

Aside from undeniable theoretical limits, all climate models are also unable to model, and thus choose to ignore, effects such as clouds, thunderstorms, hurricanes, and so forth, as well as their associated feedback loops. These ignored effects, especially clouds, account for some 60%-80% of the Earth’s total energy flow [10], which undeniably dominates global temperatures. Processes acting over short times at millimeter lengths are approximated by grids with spacing of many kilometers and time steps of many hours.

This is a problem for alarmists, because predictions of catastrophic global warming assume model accuracies many orders of magnitude beyond what any computer resources currently provide or are likely to provide in any foreseeable future; such predictions frequently rest on differences between incoming and outgoing energy flows of as little as a part per million.
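To put the scale gap between millimeter-scale processes and kilometer-scale grids in numbers, the sketch below counts how many grid columns would tile the Earth’s surface at a given horizontal spacing. It assumes a surface area of about 5.1 × 10^14 m² and square cells, and ignores vertical levels and time steps, which would multiply the cost further; the 50-100 km spacings are those quoted in reference [10]:

```python
# Back-of-the-envelope sketch: grid columns needed to tile the Earth's surface.
# ASSUMES a surface area of ~5.1e14 m^2 and square cells; vertical levels and
# time steps, which would multiply the cost further, are ignored.

EARTH_SURFACE_M2 = 5.1e14


def grid_columns(spacing_m: float) -> float:
    """Number of square columns of side `spacing_m` covering the surface."""
    return EARTH_SURFACE_M2 / spacing_m ** 2


for label, spacing in (("100 km", 1e5), ("50 km", 5e4), ("1 km", 1e3), ("1 mm", 1e-3)):
    print(f"{label:>6}: {grid_columns(spacing):.2e} columns")
```

A 100 km grid needs about 5 × 10^4 columns, while resolving millimeter-scale cloud processes would need about 5 × 10^20, a factor of 10^16 more.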

PARAMETERS AND THE ABANDONMENT OF SCIENCE

Nevertheless, the alarmist argument is that non-linear, dynamic, and strongly interacting weather processes, e.g. advection because of cloud cover or thunderstorms as a function of excess CO2, can be approximated by curve-fitting polynomials that are entirely divorced from the physical processes themselves. Instead of using fine-scale grids and the Navier-Stokes equations of basic physics, which would numerically create winds, clouds, rain, hurricanes, and temperature changes in the computer, entirely arbitrary (“informed” guess-work) polynomials are substituted to estimate the relationships between literally thousands of different weather processes, each with its own unknown adjustable magnitude.

Most of these relationships are not thought to be important and thus are omitted. Not only are interaction strengths continuously debated, being increased ten-fold in some models and eliminated entirely in others, but entirely new weather processes are continuously being introduced. With the underlying principles of physics eliminated from weather and climate models, intuition rules and anything goes.

This technique of starting with unrealistically coarse Navier-Stokes grids, and then neglecting clouds and hurricanes and whatnot, typically results in obviously nonsensical model behavior. Sanity of sorts is restored by inserting adjustable but entirely arbitrary “fudge factors” for most of the physical processes, a practice called “parameterization.” Repeatedly tweaking the necessarily large number of parameters will eventually cause the model to match historical records.

But if the CO2 concentration in the curve-fitting exercise were replaced by a different independent variable, such as the number of cheeseburgers consumed annually (which has also been increasing), the effect would be exactly the same. And since some government weather stations that stood in farmers’ fields decades ago are now located behind the exhaust outputs of fast food restaurants, cheeseburger counts might actually provide a better pseudo-linear fit to real physical processes (i.e. the CHEEZEBURGER effect ☺).

The point is that any arbitrary continuous curve (including increasing CO2 or cheeseburgers) can always be mapped via Kalman-like filters or Monte Carlo approximations into any other arbitrary curve, e.g. by tweaking the parameters of an arbitrary polynomial. This fact makes the assertion that increasing CO2 levels are the only possible cause of changing temperature records simply wrong.

Since climate models are at best bad curve fits to chaotic data, parameterized and divorced from the science, and since the arbitrariness of curve fits is so well known in science and engineering, it is difficult to understand why alarmists, including their news reporter friends, continue to parrot contrary nonsense. This point was made decades ago by John von Neumann, who noted “With four parameters I can fit an elephant and with five I can make him wiggle his trunk” (attributed to von Neumann by Enrico Fermi, as quoted by Freeman Dyson in “A Meeting with Enrico Fermi”, Nature 427 (22 Jan. 2004), page 297).

An associated problem is that there is no mathematical certainty that any particular set of fudge factors is the best possible guess; rather, there will likely be a very large number of such sets, i.e. an astronomical number of local minima in parameter space (the fit is grossly under-determined). And each set will yield a different prediction outside the curve-fitting region, which is one reason why the different models give such widely distributed and non-physical results.
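Von Neumann’s elephant can be demonstrated with plain Lagrange interpolation, a textbook technique swapped in for illustration rather than anything a climate model actually uses. With as many free coefficients as data points, a polynomial passes exactly through any series, whichever driver is used as the independent variable. The temperature anomalies and both driver series below are invented:

```python
# Minimal sketch: with as many free parameters as data points, a polynomial fits
# ANY series exactly. All data below are invented; the two drivers (a CO2-like
# series and a "cheeseburger" count) are hypothetical.


def lagrange_fit(xs, ys):
    """Return the unique polynomial of degree at most n-1 through the n points."""

    def poly(x):
        total = 0.0
        for i, (xi, yi) in enumerate(zip(xs, ys)):
            term = yi
            for j, xj in enumerate(xs):
                if j != i:
                    term *= (x - xj) / (xi - xj)  # basis polynomial: 1 at xi, 0 at other nodes
            total += term
        return total

    return poly


temperature = [0.10, 0.05, 0.20, 0.15, 0.30]   # invented anomalies
co2_like = [340.0, 350.0, 360.0, 370.0, 380.0]  # hypothetical driver A
burgers = [5.0, 5.5, 6.1, 6.4, 7.2]             # hypothetical driver B

fit_a = lagrange_fit(co2_like, temperature)
fit_b = lagrange_fit(burgers, temperature)

# Both fits reproduce every data point...
print([round(fit_a(x), 6) for x in co2_like])
print([round(fit_b(x), 6) for x in burgers])
# ...but nothing in the data constrains them outside the fitted range.
print(fit_a(420.0), fit_b(9.0))
```

Both fits reproduce every invented data point, so the fit alone cannot distinguish the CO2-like driver from the cheeseburger count; their values outside the fitted range are unconstrained by the data.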

REAL WORLD OBSERVATIONS

But the definitive consideration is that historical temperature records show no correlation at all with observed CO2 levels, whereas other factors, e.g. sunspot cycles, have strong correlations. This is true over geological time scales using proxy data, over the last two centuries from surface measurements, and over the last 4-5 decades of satellite measurements. Over geologic time, CO2 lags every major temperature change by up to 1000 years as warming oceans grudgingly give up dissolved gases. In real science, an effect cannot happen before its cause. In all cases CO2 levels increase as oceans gradually release CO2 as they warm (i.e. NO causal correlation) [11]. To believe CO2 affects the climate, one must believe that CO2 cools the Earth, because the strict mathematical correlation is negative.

The net result is that curve fits, which ignore the underlying physics, have no predictive power once the inputs to the model change. In any event, and as one should expect, all climate models created to date have been unable to predict temperatures beyond a few days [12], i.e. beyond the range used for “curve fitting”, either into the past or the future. Indeed, for the whole period since about 1998, no climate model has predicted the slight decline in global temperatures in the face of dramatically rising CO2 concentrations. In real science, such predictive failures usually mean the models and the theories supporting them are wrong.
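Claims that one series lags another are usually checked by shifting one against the other and finding the lag with the highest correlation. The minimal sketch below uses synthetic data only: a made-up sine wave stands in for temperature and a copy delayed by three steps stands in for CO2. It illustrates the method and reproduces no proxy record:

```python
import math

# Minimal sketch of lagged (Pearson) cross-correlation. The series are SYNTHETIC:
# a made-up "temperature" wave and a "CO2" copy delayed by 3 steps.


def pearson(a, b):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    va = sum((x - ma) ** 2 for x in a)
    vb = sum((y - mb) ** 2 for y in b)
    return cov / math.sqrt(va * vb)


def best_lag(leader, follower, max_lag):
    """Lag (in steps) by which `follower` trails `leader`, judged by peak correlation."""
    scores = {}
    for lag in range(0, max_lag + 1):
        # Compare leader[t] with follower[t + lag].
        scores[lag] = pearson(leader[: len(leader) - lag], follower[lag:])
    return max(scores, key=scores.get), scores


temperature = [math.sin(0.3 * t) for t in range(60)]
co2 = [0.0, 0.0, 0.0] + temperature[:-3]  # synthetic: follows temperature by 3 steps

lag, scores = best_lag(temperature, co2, max_lag=8)
print(f"CO2 trails temperature by {lag} steps (r = {scores[lag]:.3f})")
```

On this synthetic input the peak correlation falls at a lag of 3 steps, with r essentially equal to 1. With real records, the answer depends on the data and on how the series are aligned and detrended.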

CONCLUSIONS

The public is not generally informed that global warming predictions heralded by alarmists in the popular press are grossly inaccurate and unscientific estimates at best, if not outright fabrications, and that, to maintain the hysteria and government funding, calculations are arbitrarily tweaked to magnify the originally undetectable swings in model temperatures.

Rather, the definitive scientific truth is that if one effectively takes a ruler and draws a straight line through chaotic data, be it historical temperatures or stock prices, that trend line has no predictive power, either in theory or in practice. In light of this cold, hard fact, claiming that climate models have any validity beyond numerical experiments to estimate coarse relationships between crudely modeled weather processes is not only unscientific but willfully ignorant.

Claims to the contrary lack both theoretical and empirical foundation, except of course in politically inspired demagoguery. Thankfully, in a free country one may still profess a blind faith in liberal politicians promoting human-caused global warming, for a variety of even good reasons, but it is not science [13].

The only benefit of climate models is to frighten a gullible and scientifically illiterate public into accepting massive tax hikes to fund policies that, incredibly, would have no measurable effect even if fully implemented [14]. Realizing this, politicians typically divert these funds to other good causes. Does anyone think that we need more science education and fewer lies?

REFERENCES

1. Our Affair With El Niño, chapter 7: Constructing a Model of Earth's Climate, page 105.

http://www.globalchange.gov/browse/multimedia/sea-surface-temperatures-cloud-ice-and-water-vapor

2. http://www.drroyspencer.com/global-warming-101

A major component is hot air rising at the equator, which pulls in cooler air from higher latitudes and more effectively radiates heat into space. These “Hadley cells”, coupled with the “Coriolis effect”, cause the tropical trade winds to blow mostly from the north-east in the Northern hemisphere and from the south-east in the Southern hemisphere.

3. http://www.technologyreview.com/article/422809/when-the-butterfly-effect-took-flight/

4. https://www.youtube.com/watch?v=5g9WGcW_Z58

https://www.youtube.com/watch?v=2ROw_cDKwc0

http://geocraft.com/WVFossils/GlobWarmTest/A6a.html

http://www.geocraft.com/WVFossils/Reference_Docs/PMichaels_Jun98.pdf

6. http://wattsupwiththat.com/2013/03/06/nasa-satellite-data-shows-a-decline-in-water-vapor/

The specific humidity [in the upper atmosphere] has declined by 14% since 1948 using the best fit line.

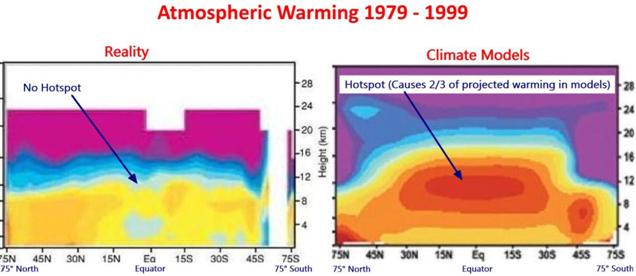

Climate models predict a hot spot of enhanced warming rate in the tropics, 8 km to 13 km altitude. Radiosonde data shows the hot spot does not exist. Red indicates the fastest warming rate. Source: http://joannenova.com.au

http://hockeyschtick.blogspot.com/2012/03/water-vapor-not-co2-controls-climate.html

http://motls.blogspot.com/2009/07/climate-feedbacks-from-measured-energy.html

http://www.leif.org/EOS/2009GL039628-pip.pdf

http://www.sciencedaily.com/releases/2012/02/120222114358.htm

http://www.americanthinker.com/blog/2015/02/the_fatal_flaw_of_global_warming.html

[ The following data table is taken directly without modification from the IPCC Published Report TAR3, Chapter 6. Radiative Forcing of Climate Change: Section 6.3.4 “Total Static Layered Atmosphere Calculation of Temperature Increase for Greenhouse Gas Forcing” ]

The calculations of global temperature increase, in degrees Centigrade and Fahrenheit, for cumulative increases in CO2 in parts per million, for a STATIC atmosphere without weather and BEFORE convection and the observed negative feedback effects are included, are as follows:

| CO2 ppm | Cumulative °C | Extra °C | Cumulative °F | Extra °F |
|---|---|---|---|---|
| 0->100 | 2.22 | 2.22 | 4.00 | 4.00 |
| 100->200 | 2.51 | 0.29 | 4.52 | 0.52 |
| 200->300 | 2.65 | 0.14 | 4.77 | 0.25 |
| 300->400 | 2.71 | 0.06 | 4.88 | 0.11 |
| 400->600 | 2.79 | 0.08 | 5.02 | 0.14 |
| 600->1000 | 2.85 | 0.06 | 5.13 | 0.11 |
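The table’s columns can be cross-checked mechanically: each “Extra” column should sum to its “Cumulative” column, and each Fahrenheit entry should equal the Celsius entry times 9/5, since these are temperature differences and take no +32 offset. A minimal sketch, with the values copied from the table and a tolerance allowing for two-decimal rounding:

```python
# Minimal consistency check of the table above. Values are copied from the table;
# TOL allows for rounding to two decimals in both unit systems.

ROWS = [
    # (range, cumulative C, extra C, cumulative F, extra F)
    ("0->100", 2.22, 2.22, 4.00, 4.00),
    ("100->200", 2.51, 0.29, 4.52, 0.52),
    ("200->300", 2.65, 0.14, 4.77, 0.25),
    ("300->400", 2.71, 0.06, 4.88, 0.11),
    ("400->600", 2.79, 0.08, 5.02, 0.14),
    ("600->1000", 2.85, 0.06, 5.13, 0.11),
]
TOL = 0.015


def check_table(rows, tol=TOL):
    """Return (range, problem) tuples; an empty list means the table is consistent."""
    problems = []
    running_c = running_f = 0.0
    for name, cum_c, extra_c, cum_f, extra_f in rows:
        running_c += extra_c
        running_f += extra_f
        if abs(running_c - cum_c) > tol:
            problems.append((name, "Celsius extras do not sum to cumulative"))
        if abs(running_f - cum_f) > tol:
            problems.append((name, "Fahrenheit extras do not sum to cumulative"))
        if abs(cum_c * 9 / 5 - cum_f) > tol:  # differences: scale only, no +32
            problems.append((name, "C to F conversion mismatch"))
    return problems


print(check_table(ROWS) or "table is internally consistent")
```

With the values as given, the check reports no inconsistencies.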

Note that mankind’s total contribution of CO2 is less than 1/3 of the net increase over the last few centuries, as CO2 amounts rose from 280 ppm to 400 ppm. The remaining 2/3rds (or more) is due to out-gassing from the world’s oceans. The total temperature increase due to this CO2 increase from all sources, from the IPCC data above, is 0.16°F.

This means the upper bound on mankind’s total increase in global temperatures because of CO2 from the beginning of time is less than about [(1/3) * 0.16°F] or 0.05°F.

But this is before the negative feedback loops of the weather/climate, which cause convective heat loss, are considered. Please recall that chaotic convective cooling reduces STATIC atmospheric greenhouse heating caused by H2O from >153 [calculated] to 59 [measured] degrees Fahrenheit. Similar effects MUST apply to CO2 and REDUCE the heating calculated above by about the same ratio.

In rebuttal, alarmists make the following claims:

a) For a DOUBLING of CO2 [from 280 to 560 ppm], the calculated temperature increase for a static atmosphere (from the IPCC data) is 0.27°F.

b) All of the CO2 concentration change is caused by people. This ignores contributions from a warming ocean, which is the sole control on CO2 levels over decades.

c) Although the observed temperature change caused by the weather for H2O is massively negative (negative feedback), somehow these same processes cause a massively positive (positive feedback) increase in the temperature for CO2.

d) Without numerical foundation, alarmists hypothesize (i.e. pull out of thin air) that this positive feedback for CO2 is perhaps a factor of three as they imaginatively tweak their weather-climate models.

e) This means humans might heat the Earth by roughly ONE DEGREE [1 doubling * (0.27°F) * 3 ≈ 0.8°F] over the next century or more.
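The figures in these notes (0.16°F, about 0.05°F, 0.27°F, and the factor of three) can be reproduced from the table if one assumes straight-line interpolation within each table row; the source does not state its interpolation method, so that is an assumption. The sketch also applies the 59/153 convective ratio to the one-third share, which is one reading of the note on convective cooling:

```python
# Sketch reproducing the arithmetic in the notes from the table, ASSUMING
# straight-line interpolation inside each table row (the source does not state
# its method). The 59/153 scaling is one reading of the convective-cooling note.

# Cumulative static-atmosphere warming in F at each table boundary (ppm, F).
CUMULATIVE_F = [(0, 0.0), (100, 4.00), (200, 4.52), (300, 4.77),
                (400, 4.88), (600, 5.02), (1000, 5.13)]


def cumulative_f(ppm: float) -> float:
    """Piecewise-linear interpolation of the table's cumulative Fahrenheit column."""
    for (x0, y0), (x1, y1) in zip(CUMULATIVE_F, CUMULATIVE_F[1:]):
        if x0 <= ppm <= x1:
            return y0 + (y1 - y0) * (ppm - x0) / (x1 - x0)
    raise ValueError("concentration outside the table's 0-1000 ppm range")


def warming_f(start_ppm: float, end_ppm: float) -> float:
    return cumulative_f(end_ppm) - cumulative_f(start_ppm)


rise_280_400 = warming_f(280, 400)  # note: 0.16 F from all sources
human_share = rise_280_400 / 3      # note: upper bound of about 0.05 F
convective_ratio = 59.0 / 153.0     # static 153 F -> observed 59 F
doubling = warming_f(280, 560)      # claim (a): 0.27 F
claimed_feedback = doubling * 3     # claims (d)-(e)

print(f"280->400 ppm: {rise_280_400:.2f} F; one-third: {human_share:.3f} F")
print(f"one-third scaled by 59/153: {human_share * convective_ratio:.3f} F")
print(f"280->560 ppm: {doubling:.2f} F; times 3: {claimed_feedback:.2f} F")
```

Under these assumptions the sketch gives 0.16°F for 280 to 400 ppm, about 0.053°F for one-third of that, about 0.021°F after the 59/153 scaling, 0.27°F for a doubling, and about 0.82°F for the tripled doubling figure.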

Given that all of this contradicts real-world evidence, it’s hard to say anything nice about this fraud, considering the massive waste of money by government bureaucracies flowing to a veritable industry of fat-cat politicians and friends.

[Note that for a static atmosphere, the TOTAL temperature increase due to water vapor is at least 153° F (and possibly as much as 170° F) and that of CO2 is 4.88° F (from published IPCC data). This implies the relative effect of CO2 is 2-3% for the real atmosphere.]
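The percentage in this note is a simple ratio of the two static-atmosphere figures it quotes. A minimal sketch using only those numbers:

```python
# Minimal sketch of the ratio in the note: static CO2 warming (cumulative to
# 400 ppm, from the table) relative to static water-vapor warming (figures quoted).

CO2_STATIC_F = 4.88
WATER_VAPOR_STATIC_F = (153.0, 170.0)  # lower and upper figures quoted in the note


def co2_relative_effect(water_vapor_f: float) -> float:
    """Static CO2 warming as a fraction of static water-vapor warming."""
    return CO2_STATIC_F / water_vapor_f


for wv in WATER_VAPOR_STATIC_F:
    print(f"water vapor {wv:.0f} F -> CO2 relative effect {100 * co2_relative_effect(wv):.1f}%")
```

The ratio comes to about 3.2% against 153°F and about 2.9% against 170°F.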

8. http://www.bibliotecapleyades.net/ciencia/ciencia_climatechange25.htm

9. Sir James Lighthill, F.R.S., “The recently recognized failure of predictability in Newtonian dynamics”, Proc. R. Soc. Lond. A 407, 35-50 (1986).

Professor Lighthill held the Lucasian Chair at Cambridge University, England, which was previously held by Sir Isaac Newton and later by Stephen Hawking.

Some excerpts include:

“Here I have to pause, and to speak once again on behalf of the broad global fraternity of practitioners of mechanics. We are all deeply conscious today that the enthusiasm of our forebears for the marvelous achievements of Newtonian mechanics led them to make generalizations in this area of predictability which, indeed, we may have generally tended to believe before 1960, but which we now recognize were false. We collectively wish to apologize for having misled the general educated public by spreading ideas about the determinism of systems satisfying Newton’s laws of motion that, after 1960, were proved to be incorrect.

…

Systems subject to the laws of Newtonian dynamics include a substantial proportion of systems that are chaotic (e.g. Meteorology); and that, for these latter systems, there is no predictability beyond a finite predictability horizon. We are able to come to this conclusion without ever having to mention quantum mechanics or Heisenberg’s uncertainty principle.

…

For example, there might be some other discipline where practitioners could be inclined to blame failures of prediction on not having formulated the right differential equations or on not employing a big enough computer to solve them precisely, nevertheless however accurately the initial conditions may be observed, prediction is STILL impossible beyond a certain predictability horizon.

…

[In comments: Perhaps I should make it clear that the results I described are not ‘scientific theories’. They are mathematical results, based upon rigorous ‘proof’ in the mathematical sense… and have the same immutability as all the 2000-year old geometrical theorems about the consequences of assuming Euclid’s axioms.]”

A relatively simple college textbook on “chaos”, with applications that include electronics in electrical engineering (a field that must today routinely deal with such real-life effects), is:

“Nonlinear Dynamics and Chaos: With Applications to Physics, Biology, Chemistry, and Engineering”, Steven H. Strogatz, CRC Press (2000); ISBN-10: 0738204536; ISBN-13: 978-0738204536.

10. “The Physics of Atmospheres”, by John Houghton, March 25, 2002, ISBN-13: 978-0521011228 and ISBN-10: 0521011221, page 41;

which states in part: “Clouds are, in fact, probably the dominant influence in the radiative budget of the lower atmosphere but adequately taking them into account raises many problems …”.

http://www.climate4you.com/ClimateAndClouds.htm

which states in part: “The cloud forming processes take place on fractions of a millimetre, while global climate models typically operate with a grid size of 50-100 km… and the total global cloud cover reached a maximum of about 69 percent in 1987 and a minimum of about 64 percent in 2000… These observations leave little doubt that cloud cover variations may have a profound effect on global climate and meteorology on almost any time scale considered”

“We are nowhere close to knowing where energy is going or whether clouds are changing to make the planet brighter ... We are not close to balancing the energy budget.” Kevin Trenberth, National Center for Atmospheric Research, USA

“Basic problem is that all models are wrong - not got enough middle and low level clouds”. Phil Jones, Director of the Climatic Research Unit, UEA, UK.

“We only understand 10 percent of the climate issue. That is not enough to wreck the world economy with Kyoto-like measures”. Henk Tennekes, former research director, Dutch Royal Meteorological Institute

“The fact is that we can't account for the lack of warming at the moment and it is a travesty that we can't”. Kevin Trenberth, National Center for Atmospheric Research, USA

“We can no longer absolutely conclude whether globally the troposphere is cooling or warming relative to the surface”. Thorne et al., BAMS, Oct. 2005

“It is interesting to see the lower tropospheric warming minimum in the tropics in all three plots, which I cannot explain. ...it is remarkably robust against my adjustment efforts”. Leopold Haimberger, Department of Meteorology and Geophysics, University of Vienna

“But it will be very difficult to make the MWP [Medieval Warm Period] go away in Greenland”. Henry Pollack, University of Michigan

“The data doesn't matter. We're not basing our recommendations on the data. We're basing them on the climate models.” Prof. Chris Folland, Hadley Centre for Climate Prediction and Research.

“I doubt the modeling world will be able to get away with this [tuning] much longer.” Tim Barnett, Scripps Institution of Oceanography, USA

“The models are convenient fictions that provide something very useful.” Dr. David Frame, climate modeler, Oxford University

11. http://www.co2science.org/subject/c/summaries/co2c...

12. http://www.climatism.net/wp-content/uploads/2013/11/Why-the-Climate-Models-are-Wrong.pdf

http://a-sceptical-mind.com/why-the-ipcc-climate-model-is-wrong

Connie Hedegaard, European Commissioner on Climate Change

“No matter if the science is all phony, there are collateral environmental benefits... Climate change provides the greatest chance to bring about justice and equality in the world”.

Christine Stewart, former Canadian Minister of the Environment as reported in Calgary Herald, December 14, 1998.

“We've got to ride the global-warming issue. Even if the theory of global warming is wrong, we will be doing the right thing, in terms of economic policy and environmental policy.”

Timothy Wirth, former Democratic Senator from Colorado and Clinton Administration Under Secretary of State for global issues; quoted in Science Under Siege by Michael Fumento, 1993, and in a National Journal interview, 1990. Wirth now heads the U.N. Foundation, which lobbies for hundreds of billions of U.S. taxpayer dollars to help underdeveloped countries fight climate change.

“A global warming treaty [Kyoto] must be implemented even if there is no scientific evidence to back the [enhanced] greenhouse effect”.

Richard Benedick, deputy assistant secretary of state, USA and representative to the IPCC Conference in Rio.

“We need to get some broad based support, to capture the public's imagination... So we have to offer up scary scenarios (about global warming), make simplified, dramatic statements and make little mention of any doubts... Each of us has to decide what the right balance is between being effective and being honest.” Stephen Schneider, Stanford Professor of Climatology and lead author of many IPCC reports.

“There is no scientific proof that human emissions of carbon dioxide are the dominant cause of the minor warming of the Earth’s atmosphere over the past 100 years.” Patrick Moore, Canadian ecologist and co-founder of the militant environmental group Greenpeace in testimony before the Senate Environment and Public Works Committee.

The United Nations founded the IPCC in order to extract trillions of dollars in “climate change reparations” from the US. In 1996, they issued a report on global warming, but after the scientists had approved the final draft, the UN bureaucrats secretly decided the report was not scary enough to attract the attention of “true believers”. So they arbitrarily DELETED the following two statements by the actual scientists, to wit:

1. “None of the studies cited above has shown clear evidence that we can attribute the observed climate changes to increases in greenhouse gases.”

2. “No study to date has positively attributed all or part of the climate change to man–made causes”

http://stephenschneider.stanford.edu/Publications/PDF_Papers/WSJ_June12.pdf

“Some people will do anything to save the earth . . . except take a science course”. P. J. O'Rourke, author, journalist.

14. “Hubris: The Troubling Science, Economics, and Politics of Climate Change” by Professor Michael Hart.

“Again, it will take a determined effort by people of faith and conscience to convince our political leaders that they have been gulled by a political movement exploiting fear of climate change to push a utopian, humanist agenda that most people would find abhorrent. As it now stands, politicians are throwing money that they do not have at a problem that does not exist in order to finance solutions that make no difference. The time has come to call a halt to this nonsense and focus on real issues that pose real dangers. In a world beset by war, terrorism, and continuing third-world poverty, there are far more important things on which political leaders need to focus.”

FIGURES

Despite alarmist claims, no model has yet been able to predict global temperatures, especially for the last two decades. Nor do measured global temperatures show any striking recent increase. It is almost as if (and indeed just as one might expect) excess CO2 doesn’t correlate with global temperatures because its effect is too small to measure.

Perhaps the massive temperature increase predicted by alarmist models was stolen by wood nymphs, or suddenly went into hiding at the bottom of the ocean and is still undetected [see graph of ACTUAL ocean temperatures below], or maybe, just maybe, was never there to begin with….

http://www.drroyspencer.com/latest-global-temperatures/

http://www.friendsofscience.org/index.php?id=453

http://www.esrl.noaa.gov/gmd/ccgg/trends/

https://www.youtube.com/watch?v=0gDErDwXqhc

http://eesc.columbia.edu/courses/v1003/lectures/solar_radiation/